Mastering Normalization RNA Seq for Clinical Research

Working with raw RNA-Seq counts is like trying to compare the speed of two cars without knowing one was driving downhill and the other was fighting an uphill battle. The raw numbers are deceptive, riddled with technical noise that has nothing to do with the biology you’re trying to measure.

Proper normalization rna seq is the critical step that levels the playing field. It corrects for variations in things like sequencing depth and gene length, ensuring that any differences you see in gene expression are actually biological, not just artifacts of the sequencing run. For anyone aiming for reproducible, clinically meaningful results, this isn't optional—it's non-negotiable.

Why Proper RNA Seq Normalization Is Non-Negotiable

If you rely on raw counts, you're building your analysis on a shaky foundation. These numbers are heavily biased by technical factors that can completely mask true biological signals or, even worse, create false ones.

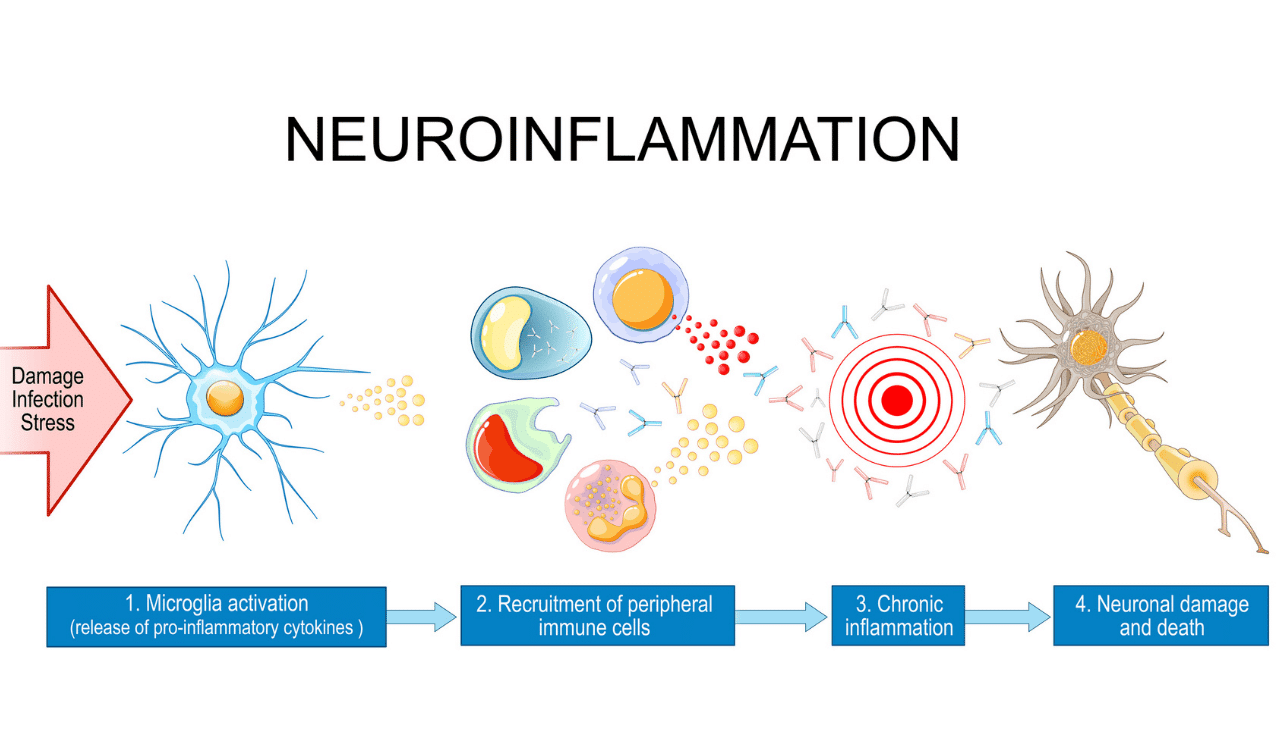

This becomes a massive liability in high-stakes applications like clinical trials. Imagine you're tracking potential neurological biomarkers—like α-synuclein or TDP-43 transcripts in neuron-derived exosomes. If one patient’s sample just happens to be sequenced more deeply than another's, the raw counts for those biomarkers will look higher, even if the real biological expression is exactly the same. Without normalization, you’d be chasing shadows instead of real signals, a costly and misleading detour in drug development.

The Cost of Skipping Normalization

Ignoring normalization doesn’t just lead to sloppy science; it actively generates wrong conclusions. The main culprits behind this technical noise are:

- Sequencing Depth: The total number of reads per sample can vary wildly. A sample with double the reads will, on average, show double the counts for every gene, creating an illusion of higher expression.

- Gene Length: It’s simple geometry. Longer genes provide more real estate for reads to align to, so they naturally collect more reads than shorter genes, even if they're expressed at the same level.

- RNA Composition: Sometimes, a handful of genes are so massively overexpressed that they hog a huge fraction of the sequencing reads. This skews the library, making all other genes appear to be expressed at lower levels than they truly are.

These biases make it impossible to conduct a fair head-to-head comparison of gene expression between your samples.

The core principle is simple: normalization ensures you are comparing apples to apples. It adjusts the raw data so that differences reflect genuine biological activity, not technical artifacts of the sequencing process.

From Raw Counts to Reliable Insights

The challenge of handling raw read counts isn't new; it’s been a known issue since the early days of RNA-Seq. The field moved quickly to find solutions, starting with methods like RPKM back in 2008 and evolving toward far more robust techniques.

A landmark 2015 study drove this point home, showing that sophisticated methods like TMM and DESeq normalization could slash classification error by 20-30% compared to using raw data. In one of the datasets they tested, normalized data achieved over 70% agreement on differentially expressed genes across different methods. Raw data, on the other hand, barely scraped 50%. This perfectly illustrates the stabilizing power of good normalization.

For our partners in pharmaceutical development, building these methods into Phase I-III studies can cut down false positives in pharmacodynamic readouts by 15-25%. Solid data preprocessing, which includes knowing how to clean data, is the bedrock of trustworthy results and a foundational part of our biomarker discovery services.

Ultimately, normalization is the essential first step that transforms raw, noisy data into a powerful asset for making critical clinical decisions.

Choosing Your Normalization Method Wisely

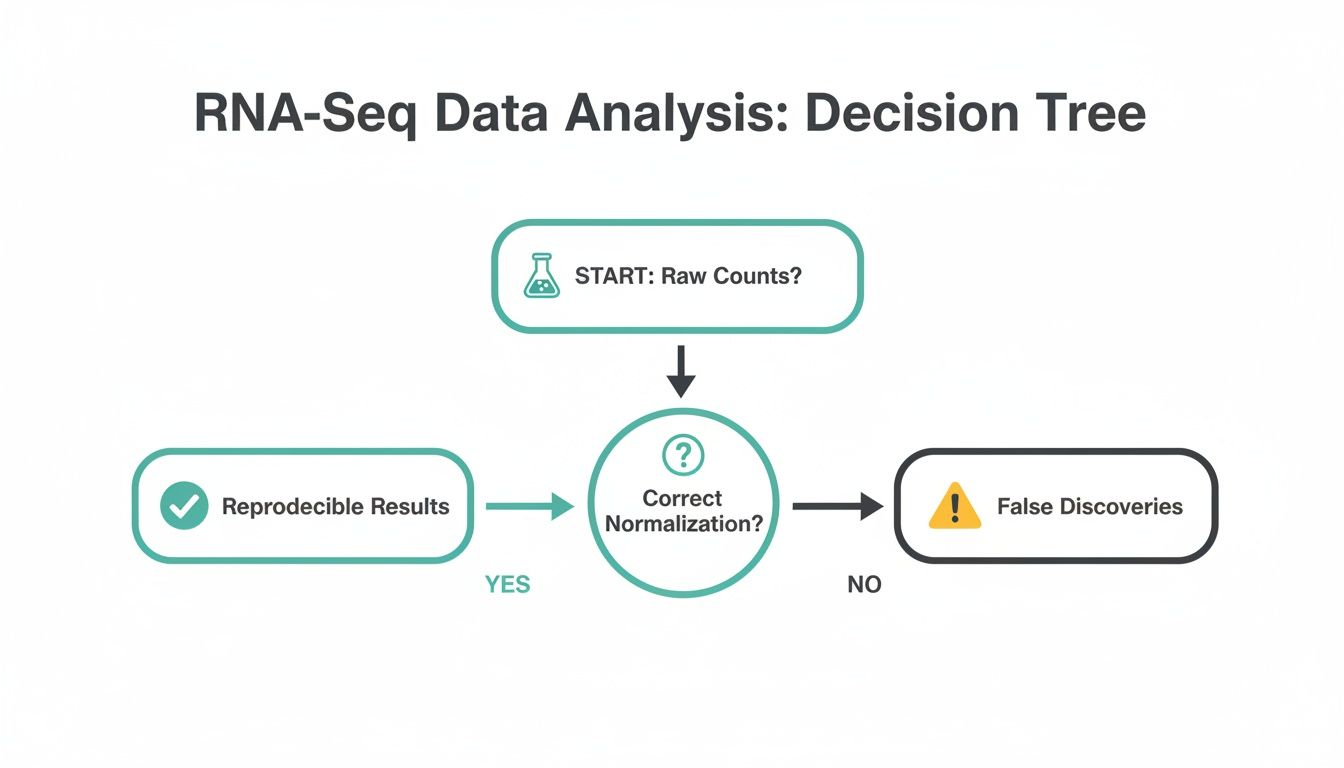

Picking the right normalization method is one of the most consequential decisions you'll make in your entire RNA-Seq workflow. This choice fundamentally shapes your results, dictating which genes get flagged as significant and which biological pathways appear to be in play. A poor choice can send you down a rabbit hole of false discoveries, while a smart one ensures your findings are robust and reproducible.

The first major fork in the road is understanding the difference between within-sample and between-sample normalization. Getting this right is the key to avoiding some of the most common—and costly—analytical mistakes.

This decision point is so fundamental that it sits right at the heart of any reliable RNA-Seq workflow. Starting with properly normalized data isn't just a best practice; it's the only way to generate trustworthy results.

The image makes it clear: using raw, unadjusted counts is a direct path to misleading conclusions. Proper RNA-Seq normalization is the bedrock of any successful analysis.

Within-Sample Normalization: RPKM, FPKM, and TPM

Methods like Reads Per Kilobase of transcript per Million mapped reads (RPKM), its paired-end cousin Fragments Per Kilobase (FPKM), and Transcripts Per Million (TPM) are all designed for within-sample comparisons. They adjust for both sequencing depth (how many reads you got) and the length of the gene itself.

This makes them useful for answering questions like, "Within this one sample, is Gene A or Gene B expressed more?" In this context, TPM is generally the better choice over RPKM/FPKM because it ensures the sum of all TPMs in each sample is equal, which makes expression values feel more like relative percentages.

But here's the critical point so many people miss: these methods are completely unsuitable for comparing gene expression between different samples. They fail to correct for compositional bias, a nasty artifact where a few extremely high-expressed genes in one sample can make every other gene look artificially suppressed. Using them for differential expression analysis is a recipe for disaster.

Between-Sample Normalization for Differential Expression

To compare gene expression across different samples—which is the goal of almost every clinical and research study—you absolutely must use a between-sample normalization method. These techniques are designed to calculate scaling factors that make the counts from different libraries directly comparable.

The two undisputed workhorses in this category are the Trimmed Mean of M-values (TMM) and the median-of-ratios method.

Trimmed Mean of M-values (TMM): This is the engine inside the edgeR package, and it's exceptionally robust. TMM works on the assumption that most of your genes are not differentially expressed. It calculates a scaling factor by focusing only on the subset of genes that appear stable, effectively ignoring the extreme outliers. This makes it a great choice for experiments where you expect big, asymmetrical changes in gene expression.

Median-of-Ratios (DESeq2): Central to the widely used DESeq2 package, this method also generates sample-specific size factors. It calculates the ratio of each gene's count to its geometric mean across all samples, and the median of these ratios becomes the scaling factor for that sample. It performs beautifully across a huge range of conditions.

Choosing the Right RNA-Seq Normalization Method

Selecting the best approach depends entirely on your research question. Are you comparing genes within a single sample or looking for differences across a cohort? The table below breaks down the key methods and their ideal applications.

| Method | Primary Goal | Best For | Key Consideration | Clinical Trial Suitability |

|---|---|---|---|---|

| TPM | Within-sample comparison | Visualizing the relative abundance of different genes within a single library. | Not for between-sample comparisons. Susceptible to compositional bias. | Very Low |

| RPKM/FPKM | Within-sample comparison | Legacy method; largely replaced by TPM for visualization tasks. | Not for between-sample comparisons. Known inconsistencies across samples. | Very Low |

| TMM (edgeR) | Between-sample comparison | Differential expression analysis, especially with highly skewed or asymmetric expression changes. | Assumes most genes are not DE. Robust to outliers. | High |

| Median-of-Ratios (DESeq2) | Between-sample comparison | Most standard differential expression analyses across a wide range of conditions. | Stable and widely used. Performs well with varying library sizes. | High |

In short, for any kind of differential expression analysis, you must use a method like TMM or DESeq2. TPM and its predecessors are for a completely different purpose.

The impact of this choice is not trivial. A pivotal 2018 study on the impact of normalization methods found that the normalization step was the single most dominant factor influencing differential expression outcomes—outweighing the choice of statistical test by 2-3 fold.

When methods like DESeq2 and TMM were used, the false discovery rate stayed below 5%, even with significant biological shifts. In stark contrast, improper methods resulted in false discovery rates as high as 12-18% and produced 25-40% more false positives in real-world experiments.

For any differential expression analysis, your choice is clear: use a dedicated between-sample method like TMM or DESeq2. Your decision between the two often comes down to your experimental design and the software pipeline you're most comfortable with.

This isn't just academic. At NeuroDex, applying DESeq2 normalization to neuroinflammation markers like NF-L and NF-κB—isolated from neuron-derived exosomes using our ExoSort platform—routinely reduces variance by up to 30% in clinical trial data. This allows us to deliver incredibly precise pathway engagement metrics for large patient cohorts.

Imagine you're analyzing low-expression neuroinflammatory markers. Here, the stability offered by DESeq2's median-of-ratios is a huge win. On the other hand, if you're studying a drug that causes massive, widespread transcriptional changes, TMM's trimming approach might provide more reliable scaling by ignoring the most extremely affected genes. Let your data and your research question guide your final choice.

Transforming Your Data for Visualization and Clustering

While methods like TMM or DESeq2 normalization give you the right numbers for statistical testing, those counts aren't ready for prime time when it comes to visualization. For essential quality control steps like Principal Component Analysis (PCA) or generating insightful heatmaps, you need to apply another layer of processing: data transformation.

The reason is simple. Even after normalization, the variance in your count data is still tightly coupled to the mean expression level. Genes with high counts naturally have much higher variance than genes with low counts. This behavior throws a wrench in the works for many exploratory tools like PCA, which assume that variance is more or less constant across the entire range of your data.

Applying a variance-stabilizing transformation effectively uncouples this relationship. It puts all genes on a more comparable footing, preventing the handful of most highly expressed genes from completely dominating your plots and masking the more subtle signals.

The Simple but Flawed Log2 Transformation

The most common first attempt is a log2 transformation, usually in the form of log2(n + 1), where n is your normalized count. That little "+1" pseudocount is crucial, as it keeps you from trying to take the log of zero, which is an undefined mathematical operation. It's quick, easy to understand, and does a decent job of squashing the massive dynamic range of RNA-Seq data.

But the log2 transformation has a pretty significant blind spot. It has a nasty habit of artificially inflating the variance of genes with very low counts. For genes hovering near zero, tiny, random fluctuations in counts can result in huge fold changes on the log scale, introducing a lot of noise into your downstream plots.

A key takeaway for any normalization rna seq workflow is that normalized counts are for differential expression testing, while transformed counts are for visualization and clustering. Using the wrong data type for the task can lead to skewed interpretations and flawed conclusions.

So, while it's a definite step up from looking at raw counts, the standard log2 transform isn't the best choice for creating publication-quality PCA plots or heatmaps, especially if your dataset is full of low-count genes.

Advanced Transformations for Robust Visualization

For a more reliable and statistically sound approach, you should turn to methods designed specifically for the unique properties of RNA-Seq count data. Two of the best options come straight from the DESeq2 package: the Variance Stabilizing Transformation (VST) and the regularized-logarithm (rlog).

Variance Stabilizing Transformation (VST): This function is a workhorse. It quickly calculates a transformation that effectively stabilizes variance across the full spectrum of gene expression. It's particularly fast and powerful for datasets with a moderate to large number of samples, say 30 or more. Because it's less sensitive to library size differences, it’s also robust when you have outlier samples.

Regularized-Logarithm (rlog): The rlog transformation also tames the variance of low-count genes, but it's a bit more nuanced. It takes the data's underlying distribution into account, which can be especially beneficial when you have fewer samples (e.g., less than 30). The trade-off is that it’s noticeably slower to compute than the VST.

The choice between VST and rlog often boils down to your sample size. If you're working with a large clinical cohort, the VST is an efficient and excellent choice. For smaller pilot studies or experiments with few replicates, the rlog might give you a more accurate picture, even if it takes a bit longer to run.

Practical Application in R with DESeq2

Generating these transformed datasets in R is incredibly straightforward. Once you have your DESeq2 object (let's call it dds), you can get your transformed data with just a single line of code.

Assuming 'dds' is your DESeqDataSet object

Apply the Variance Stabilizing Transformation

vst_data <- vst(dds, blind = TRUE)

Apply the regularized-logarithm transformation

rlog_data <- rlog(dds, blind = TRUE)

Pay close attention to the blind = TRUE argument. This is critical. It instructs the function to perform the transformation without any knowledge of your experimental design (like which samples are "treatment" and which are "control"). This is essential for doing unbiased quality control, ensuring that your exploratory plots aren't accidentally influenced by the very groups you plan to test.

With this transformed data in hand, you’re ready to generate a PCA plot and see how your samples cluster—a fundamental first step to verify that your biological replicates group together and to spot any potential batch effects.

From Theory to Code: Practical Normalization with R and Python

Understanding the theory behind normalization is one thing, but applying it correctly is where your analysis truly takes shape. Let's move from concepts to reproducible code. Here, we'll walk through the practical steps for performing robust RNA-seq normalization using the most trusted tools in both R and Python.

We’ll work with a common scenario from clinical research: a raw count matrix with samples from "treatment" and "placebo" groups. The goal is to load this data, apply a best-practice normalization method, and generate a clean data table ready for downstream analysis.

Normalization in R with DESeq2

For most bioinformaticians, R and the Bioconductor project are the definitive ecosystem for RNA-Seq analysis. The DESeq2 package is the undisputed workhorse for differential expression, and its normalization functions are both powerful and straightforward. At its core, DESeq2 uses the median-of-ratios method we covered earlier.

First, we need to load our raw counts and the corresponding metadata. Our counts.csv file has genes as rows and samples as columns, while metadata.csv links each sample to its condition.

Load necessary libraries

library(DESeq2)

Load the raw count data and metadata

count_data <- read.csv("counts.csv", row.names = 1)

meta_data <- read.csv("metadata.csv", row.names = 1)

A quick sanity check to ensure sample names match

all(colnames(count_data) %in% rownames(meta_data))

Create the DESeqDataSet object—the standard input for DESeq2

dds <- DESeqDataSetFromMatrix(countData = count_data,

colData = meta_data,

design = ~ condition)

This single command runs the median-of-ratios normalization

dds <- estimateSizeFactors(dds)

To retrieve the normalized counts, use the counts() function

normalized_counts <- counts(dds, normalized = TRUE)

Now you can save these clean counts for other analyses

write.csv(normalized_counts, "normalized_counts_deseq2.csv")

The key function here is estimateSizeFactors(). It calculates a unique scaling factor for each sample based on the median-of-ratios method. These size factors are stored inside the dds object, and calling counts(dds, normalized=TRUE) simply applies them to return the final normalized count matrix.

The Bioconductor website is an incredible resource, offering extensive documentation and tutorials for packages like DESeq2.

This screenshot shows the main page for the DESeq2 package on Bioconductor. I highly recommend exploring the vignettes here to deepen your understanding of functions like estimateSizeFactors().

Normalization in R with edgeR

Another fantastic option in R is the edgeR package, which is built around the Trimmed Mean of M-values (TMM) normalization method. The workflow is conceptually similar to DESeq2's, just with different functions.

Load the edgeR library

library(edgeR)

Load the same raw count data

count_data <- read.csv("counts.csv", row.names = 1)

group <- factor(c("Treatment", "Treatment", "Placebo", "Placebo")) # Example groups

Create a DGEList object, the standard data structure for edgeR

dge <- DGEList(counts = count_data, group = group)

This command performs TMM normalization

dge <- calcNormFactors(dge)

The normalization factors are stored in dge$samples$norm.factors

print(dge$samples)

To get TMM-normalized values (as counts-per-million)

tmm_cpm <- cpm(dge, log = FALSE)

The calcNormFactors() function computes the TMM factors but doesn't alter the raw counts in the object. Instead, these factors are automatically applied by downstream edgeR functions during differential expression testing.

Pro Tip: Don't be surprised if the

norm.factorsaren't all exactly 1.0. This is normal. A factor below 1.0 indicates the library size was scaled down, while a factor above 1.0 means it was scaled up to make it comparable to the other samples.

Normalization in Python with Scanpy

While the Python ecosystem is dominant in single-cell analysis, tools can certainly be adapted for bulk RNA-Seq. For this, Scanpy is a common choice. Here’s how you could perform a basic library size normalization, which is conceptually similar to calculating counts-per-million (CPM).

import scanpy as sc

import pandas as pd

Load the count data

Scanpy expects samples as rows and genes as columns, so we transpose

count_data = pd.read_csv("counts.csv", index_col=0).transpose()

Create an AnnData object, the core data structure in Scanpy

adata = sc.AnnData(count_data)

This is the key normalization step

sc.pp.normalize_total(adata, target_sum=1e6) # Normalizes to counts per million (CPM)

The normalized data lives in adata.X

Convert it back to a pandas DataFrame if you like

normalized_counts_df = pd.DataFrame(adata.X, index=adata.obs_names, columns=adata.var_names)

Save the normalized data

normalized_counts_df.transpose().to_csv("normalized_counts_python.csv")

This Python code gives you a simple CPM normalization. While it effectively adjusts for differences in sequencing depth, it's less robust against compositional bias than the TMM or median-of-ratios methods. For this reason, DESeq2 and edgeR remain the gold standard for most bulk differential expression analyses.

Mastering these code implementations is a critical skill for any researcher. It allows you to choose the right tool for the job and apply it with confidence. As NeuroDex continues to support groundbreaking studies, like the new neuron EV RNA-Seq dataset in AMP PDRD, the need for robust, reproducible analytical pipelines has never been more apparent.

Going Beyond the Basics: Advanced Normalization Challenges

If only every dataset was clean and simple. While the standard normalization methods are great for many experiments, clinical research throws curveballs. Think multi-site trials, longitudinal studies, or samples with barely-there gene expression. These messy, real-world scenarios demand a more sophisticated normalization rna seq strategy.

You’ll quickly run into two major hurdles: batch effects and genes with extremely low counts. Getting these right is non-negotiable if you want to pull reliable biological insights from your data.

Confronting Batch Effects in Clinical Trials

Batch effects are the technical variations that creep in when you process samples at different times, with different people, or at different lab sites. They have absolutely nothing to do with biology, but they can easily masquerade as a biological signal.

This is a huge threat. Imagine your treatment group was mostly processed in Batch A and your control group in Batch B. The expression differences you find might just be noise from the sequencing run, not the effect of your drug. In a multi-site clinical trial, this isn’t a risk—it's a near certainty.

So, how do you fix it? You have two main options:

Explicit Correction: Tools like ComBat-seq, part of the sva Bioconductor package, are built to directly find and remove batch variation from the count data itself. This is your go-to when you need corrected data for things like heatmaps, PCA plots, or machine learning models that can't account for the batch internally.

Model-Based Adjustment: This is the more statistically sound approach for differential expression. Instead of changing the counts, you tell your statistical model about the batches. In

DESeq2, this is as simple as adding "batch" to your design formula:~ batch + condition. The model then estimates the effect of your condition after accounting for the variance caused by the batch.

For differential expression analysis, always go with the model-based approach (

~ batch + condition). It keeps the statistical properties of your count data intact. Reserve a correction tool like ComBat-seq for downstream tasks like visualization, where you need to see the data with the batch effect visually removed.

The Role of Spike-In Controls

How can you be sure your normalization worked? You need a ground truth, and that's where ERCC spike-in controls come in. These are a pre-made cocktail of synthetic RNA transcripts, each with a known sequence and concentration.

You simply add a tiny, consistent amount of this mix to every RNA sample before you start library prep. Since you know exactly how much of each synthetic transcript went in, any variation you see in their counts across your samples must be technical noise.

Spike-ins are incredibly useful for:

- Pinpointing the lower limit of detection in your experiment.

- Checking if your chosen normalization method actually worked as expected.

- Quantifying technical variability, which is absolutely critical in single-cell RNA-Seq (scRNA-seq).

Spike-ins also provide vital quality control in niche fields like the study of extracellular vesicles in neuroscience, where researchers work with minuscule amounts of RNA recovered from neuron-derived exosomes. You can learn more about extracellular vesicles in neuroscience to appreciate the unique challenges in that space.

Advanced Methods in Single-Cell Normalization

The noise problem gets amplified in single-cell RNA-Seq, where the data is inherently much sparser and more variable. A 2020 benchmark study of scRNA-Seq normalization methods made it clear that simple library size scaling isn't enough.

One of the top-performing algorithms, BASiCS, used spike-in data to dramatically improve cell type classification accuracy from ~75% with raw data to over 92%. Other methods like SCnorm also showed huge gains. For a pharma team analyzing exosome biomarkers for Alzheimer's, a method like BASiCS could slash batch effects by 35%—a game-changing improvement for stratifying patients in a multi-center trial. You can dive into the complete findings on single-cell RNA-Seq normalization methods to see how these advanced techniques stack up.

Handling Genes with Low Counts

One final, practical step is to clean out genes with very few reads. These low-count genes are statistically noisy and can throw off your normalization calculations and downstream analyses. It's standard practice to filter them out before you normalize.

But you have to filter intelligently. A widely used rule of thumb is to remove any gene that doesn't have at least 1 count-per-million (CPM) in a minimum number of samples. That "minimum number" should be the size of your smallest biological group.

For instance, if you have 10 treatment and 10 control samples, you'd set your rule to keep only genes with at least 1 CPM in 10 or more samples. This pre-filtering step tidies up your dataset, reduces your multiple testing burden, and ultimately makes your normalization more robust.

Clearing Up Common Questions in RNA-Seq Normalization

When you're working with RNA-Seq data, a few questions about normalization pop up again and again. Getting these concepts straight isn't just academic—it's essential for making sure your entire analysis pipeline is sound. Let's walk through the most frequent sticking points and provide some practical answers.

Think of it less as memorizing a list of algorithms and more as building an intuition for why and when you need to normalize. Here’s what trips up even experienced researchers.

Should I Use TPM or TMM for Differential Expression?

This is a big one, and the answer is firm: you should never use TPM, RPKM, or FPKM for differential expression analysis. These methods are built to help you understand gene expression within a single sample, not to compare genes between different samples.

Their main weakness is a susceptibility to composition bias. Imagine one of your samples has a handful of genes that are massively overexpressed. Because TPM is a relative measure (parts per million), the values for every other gene in that sample get artificially squashed. When you compare that sample to another, you can end up with a flood of false positives, sending you on a wild goose chase for biology that isn't really there.

For any analysis that compares samples—like differential expression—you must use a dedicated between-sample normalization method. This means relying on the scaling factors produced by TMM (the method behind edgeR) or the median-of-ratios method (used in DESeq2). These tools were specifically created to handle differences in both library size and composition, giving your statistical tests a reliable foundation.

What Is the Difference Between Normalized and Transformed Counts?

This is another area where confusion can lead to major analysis errors. Normalized counts and transformed counts are not interchangeable; they serve completely different purposes.

Normalized Counts: These are raw counts that have been adjusted to correct for technical artifacts like sequencing depth and compositional differences. This is the data you should feed directly into differential expression tools like DESeq2 and edgeR. Their statistical models are specifically designed to work with the unique properties of count data.

Transformed Counts: This data has been taken a step further to stabilize its variance across the entire range of expression values. Methods like a log2 transformation, the variance-stabilizing transformation (VST), or regularized log (rlog) are critical for exploratory data analysis. They make it possible to run algorithms like PCA or clustering, which assume that variance is constant across the data.

Plugging transformed data into DESeq2 is a common mistake that invalidates the statistical test. On the other hand, running a PCA on normalized-but-not-transformed counts will give you a plot dominated by your most highly expressed genes, hiding the true biological relationships between your samples.

How Do I Know if My Normalization Was Successful?

You shouldn't just run the code and hope for the best. There are a few simple visual checks you can—and should—perform to get confidence that your normalization worked as expected.

First, make boxplots of the log-transformed counts for each of your samples. Before normalization, you'll probably see that the boxes are all over the place, reflecting the different library sizes. After a successful normalization, those boxplots should look much more aligned, confirming that you’ve corrected for sequencing depth.

Your other best friend here is a PCA plot. It’s not uncommon to see unnormalized data cluster by technical variables, like which batch the samples were sequenced in. After proper normalization and transformation, your samples should cluster by their true biological groups (e.g., your treatment vs. control). When that happens, you can be much more confident that the signals you're seeing are real.

At NeuroDex Inc., we embed these rigorous QC and normalization steps into every clinical trial project. This ensures the NDE-derived biomarker data we deliver is clean, dependable, and ready to guide critical decisions. Our end-to-end platform empowers pharmaceutical partners to move forward with confidence in their neurotherapeutic programs. Discover how we can accelerate your research at https://neurodex.co.

Leave a Reply